Web IR / NLP Group (WING) @ NUS

Latest News

Min was quoted in Science on the value of open, replicable AI for research: “It’s very important to put this type of open-source research out there because it is replicable.”

Min gave two invited keynotes at AAAI 2026 workshops on 26 and 27 January, entitled “The Research Manifold” and “Top 5 trends to watch in the future of IR research (and why they are irrelevant)”. Both talks are now available to watch.

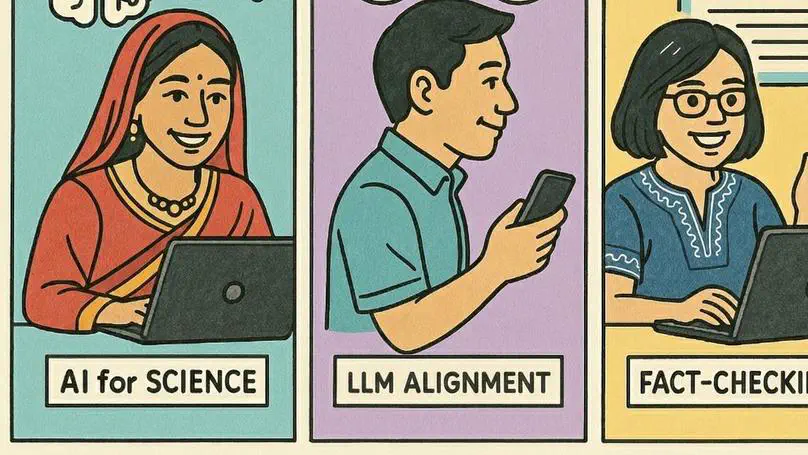

The Web, Information Retrieval, and Natural Language Processing (WING) group at the School of Computing, National University of Singapore is actively seeking postdoctoral scholars and doctoral students to start in 2026 across three cutting-edge research areas: AI for Science, LLM Socioethical Alignment, and Fact Checking. WING is led by Associate Professor Kan Min-Yen.

Jiaying helped represent WING’s interests in the iGyro information resilience project at the recent NUS Computing networking session at NeurIPS 2025.

Check out 👀 Yisong Miao’s feature as a Vector Institute research intern in the latest 2025 installment of their newscast. Although he wasn’t able to travel to the institute in 🇨🇦 for the immersive in-person part, WING is proud that he was able to participate in the programme while staying on the other side of the world!